The process of capturing and reproducing realistic, real-world objects for any virtual environment is complex and time-consuming. Imagine using a conventional camera with a built-in flash, from any mobile device or off-the-shelf digital camera, to simplify this task. A global team of computer scientists has developed a novel method that replicates physical objects for virtual and augmented reality using a point-and-shoot camera with a flash, without the need for additional and often expensive supporting hardware.

‘To faithfully reproduce a real-world object in the VR/AR environment, we need to replicate the 3D geometry and appearance of that object. Traditionally, this has been either done manually by 3D artists, which is a labor-intensive task, or by using specialized, expensive hardware. Our method is straightforward, cheaper, efficient, and reproduces realistic 3D objects by just taking photos from a single camera with a built-in flash,’ says Min H. Kim, Associate Professor of Computer Science at KAIST, South Korea, and lead author of the research. Kim and his collaborators, Diego Gutierrez (Professor of Computer Science at Universidad de Zaragoza, Spain) and KAIST Ph.D. students Giljoo Nam and Joo Ho Lee, will present this new work at SIGGRAPH Asia 2018 in Tokyo, from 4th to 7th December. The annual conference features the most respected technical and creative members in the fields of computer graphics and interactive techniques, and showcases leading-edge research in science, art, gaming and animation, among other sectors.

Existing approaches to acquiring physical objects require specialized hardware setups to model the geometry and appearance of the desired objects. Those setups might include a 3D laser scanner, multiple cameras or a lighting dome with more than a hundred light sources. In contrast, this new technique needs only a single camera to produce high-quality outputs. ‘Many traditional methods (using a single camera) can capture only the 3D geometry of objects, but not the complex reflectance of real-world objects, given by the SVBRDF,’ notes Kim. The SVBRDF (spatially varying bidirectional reflectance distribution function), which describes how a surface reflects light at each point, is key to obtaining an object’s real-world shape and appearance. ‘Using only 3D geometry cannot reproduce the realistic appearance of the object in the AR/VR environment. Our technique can capture high-quality 3D geometry as well as its material appearance, so that the objects can be realistically rendered in any virtual environment.’

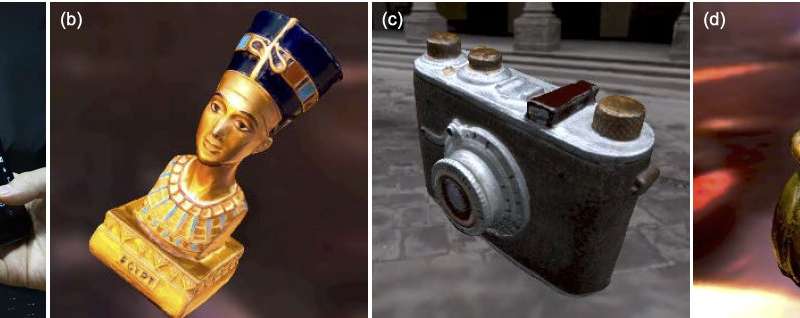

The research group demonstrated their framework using a digital camera (a Nikon D7000) and the built-in camera of an Android mobile phone in a series of examples in their paper, ‘Practical SVBRDF Acquisition of 3D Objects with Unstructured Flash Photography.’ The novel algorithm, which does not require any input geometry of the target object, successfully captured the geometry and appearance of 3D objects with basic flash photography and reproduced consistent results. The examples showcased in the work included a diverse set of objects spanning a wide range of geometries and materials, including metal, wood, plastic, ceramic, resin and paper, and comprising complex shapes such as a finely detailed mini-statue of Nefertiti.

In future work, the researchers hope to further simplify the capture process or extend the method to include dynamic geometry or larger scenes.

Source: Association for Computing Machinery, New York, USA